Is it reasonable to run two LEDs in series and dispense with the resistor? Seems like it would keep the current down yet still light up the LEDs to a reasonable level. Has anyone tried that yet?

Provided you choose the right LEDs, you can put 2 in series with a suitable current limiting resistor.

With a regulated supply, 2 series LEDs and 1 series resistor can give good results. But if the 5v is regulated, you probably have a higher input voltage available ahead of the regulator, and could run a chain of LEDs from that through a buffer transistor.

Note that high-brightness LEDs usually drop more voltage when operating, so it may not be possible to put 2 of these in series with a 5v PICAXE supply.

The difficulty is determining the voltage drop and the current, and therefore the resistance value to use. It depends on the supply voltage and type. If using batteries, then the voltage will drop during their life. If using 2 x 2.0v LEDs on a 5v supply, you are left with 1v or less to drop across the resistor.

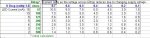

The attached jpeg snip from Excel highlights the problem. The first table calculates the size of dropping resistor when using 2 LEDs having a forward voltage of 2.0v+2.0v=4.0v and a 5.0v supply, against several desired LED currents. The second table shows what happens to the LED current as the supply voltage drops from 5.0v to 4.0v as the batteries age.

Take the example of a desired current of 10mA with fresh batteries (assume 5.0v). That needs a 100 ohm resistor to drop the remaining 1.0v. As the batteries age down to, say, 4.2v, only 0.2v is left across the resistor and the current through the LEDs will have dropped to 2.0mA - a substantial reduction in LED brightness.
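
If you want to try other values without the spreadsheet, the same arithmetic can be sketched in a few lines of Python. This is only a rough sketch, not the actual Excel sheet: the function names are made up for illustration, and it assumes each LED drops a fixed 2.0v regardless of current. Real LEDs drop a little less at low current, so the real fall-off will be somewhat gentler than this predicts.

```python
def series_resistor(supply_v, led_vf, n_leds, target_ma):
    """Resistor (ohms) that drops the leftover voltage at the target current.

    Assumes a fixed forward voltage per LED (a simplification).
    """
    headroom_v = supply_v - n_leds * led_vf
    if headroom_v <= 0:
        raise ValueError("supply too low to forward-bias the LED chain")
    return headroom_v / (target_ma / 1000.0)


def led_current_ma(supply_v, led_vf, n_leds, resistor_ohms):
    """LED current (mA) for a given supply, using the same fixed-Vf model."""
    headroom_v = supply_v - n_leds * led_vf
    # No headroom left means the chain is treated as not conducting.
    return max(headroom_v, 0.0) / resistor_ohms * 1000.0


if __name__ == "__main__":
    # Size the resistor for 10mA on fresh batteries (5.0v, 2 x 2.0v LEDs).
    r = series_resistor(5.0, 2.0, 2, 10.0)
    print(f"resistor: {r:.0f} ohm")

    # Then see what that resistor gives as the batteries sag.
    for v in (5.0, 4.8, 4.6, 4.4, 4.2, 4.0):
        print(f"{v:.1f}v -> {led_current_ma(v, 2.0, 2, r):.1f}mA")
```

With the numbers from the example above, this gives the 100 ohm resistor and roughly 2mA at 4.2v. Swapping in a higher forward voltage for high-brightness LEDs shows quickly when there is no headroom left on a 5v supply.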